It just works.

The moment you open it.

v2 ships with the model bundled. No setup. No subscription. No API key.

Plug in an NVIDIA GPU and it goes 3–15× faster — automatically. Linux + Windows. One click.

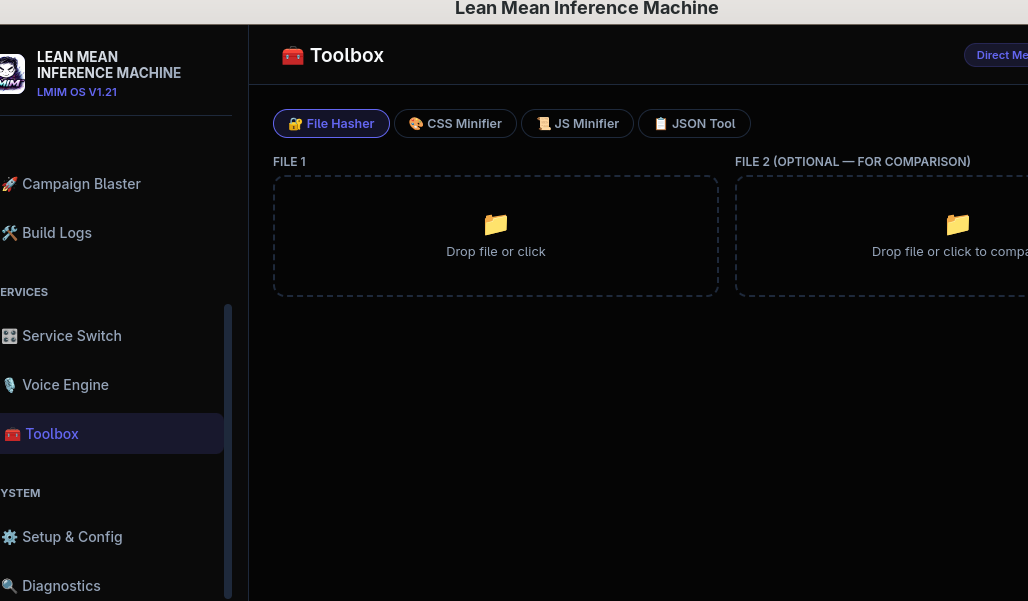

Developer Toolbox

Tools you reach for daily — built right into the app.

- SHA-1/256/384/512 hash checker

- CSS & JS minifier with size stats

- JSON validator + pretty-printer
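For a sense of what the hash checker and the JSON validator do, here is a minimal Python sketch using only the standard library. It is an illustration, not the app's actual code: the function names `check_hash` and `pretty_json` are hypothetical, and the app's own implementation is not described on this page.

```python
import hashlib
import json

# The four algorithms named in the toolbox list (hashlib's spelling).
SUPPORTED = ("sha1", "sha256", "sha384", "sha512")


def check_hash(data: bytes, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Return True if the digest of `data` matches `expected_hex`."""
    if algorithm not in SUPPORTED:
        raise ValueError(f"unsupported algorithm: {algorithm}")
    actual = hashlib.new(algorithm, data).hexdigest()
    # Published checksums are often upper-case or padded with whitespace.
    return actual == expected_hex.strip().lower()


def pretty_json(text: str) -> str:
    """Validate `text` as JSON and return it indented.

    json.loads raises json.JSONDecodeError (a ValueError) on invalid input,
    so a successful return doubles as the validation step.
    """
    return json.dumps(json.loads(text), indent=2, sort_keys=True)
```

Calling `check_hash(open("installer.bin", "rb").read(), published_checksum)` would compare a downloaded file against its published SHA-256; the file name and variable here are placeholders.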


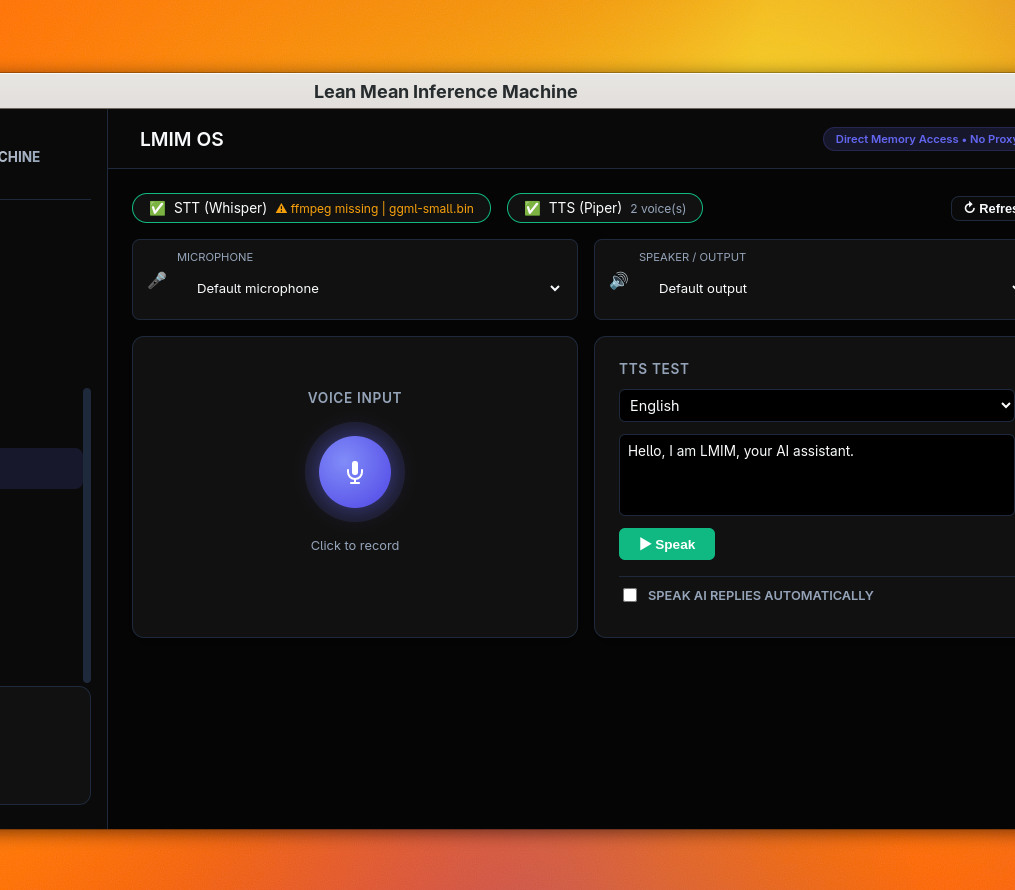

- "Check the weather today." Searches the web, speaks the answer aloud.

- "Schedule Pedro Tuesday 3pm." Books the visual calendar, WhatsApps Pedro to confirm.

- "Build me a Flask dashboard." Writes it, tests it, iterates. Inspector validates before deploy.

CUDA Lightning

Auto-detects your NVIDIA GPU. 3–15× faster than CPU. No config.

- llama.cpp + whisper.cpp on CUDA 12.x

- GTX 16xx · RTX 20xx · 30xx · 40xx (compute 7.5+)

- Falls back to CPU if no GPU detected

- Qwen 3.5 bundled. Open the installer — model already inside. No download dance.

- ~120 tok/s on RTX 3060. ~80 tok/s on a GTX 1650 Ti. CPU fallback automatic.

- Cloud APIs are optional. OpenAI, Anthropic, Groq — toggle in settings, never required.
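The auto-detect and CPU-fallback behaviour described above can be sketched roughly as follows. This is an assumption about one way to do it, not the app's actual detection logic, which this page does not describe. It shells out to `nvidia-smi` and reads its `compute_cap` query field, which needs a reasonably recent NVIDIA driver; the function names and the `MIN_COMPUTE` constant are hypothetical, with the threshold taken from the "compute 7.5+" line.

```python
import shutil
import subprocess

# Minimum compute capability, taken from the "compute 7.5+" bullet.
MIN_COMPUTE = (7, 5)


def parse_compute_caps(output: str) -> list[tuple[int, int]]:
    """Parse lines like '8.6' into (major, minor) tuples; skip anything else."""
    caps = []
    for line in output.splitlines():
        major, dot, minor = line.strip().partition(".")
        if dot and major.isdigit() and minor.isdigit():
            caps.append((int(major), int(minor)))
    return caps


def pick_backend(caps: list[tuple[int, int]]) -> str:
    """Choose 'cuda' if any GPU meets the minimum, otherwise 'cpu'."""
    return "cuda" if any(cap >= MIN_COMPUTE for cap in caps) else "cpu"


def detect_backend() -> str:
    """Query nvidia-smi if present; any failure falls back to 'cpu'."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return "cpu"
    try:
        result = subprocess.run(
            [exe, "--query-gpu=compute_cap", "--format=csv,noheader"],
            capture_output=True, text=True, timeout=10, check=True,
        )
    except (OSError, subprocess.SubprocessError):
        return "cpu"
    return pick_backend(parse_compute_caps(result.stdout))
```

Keeping the parsing and the decision in pure functions means the fallback rule can be checked without a GPU present; only `detect_backend` touches the system.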